14.2 Understanding Search

Learning Objectives

After studying this section you should be able to do the following:

- Understand the mechanics of search, including how Google indexes the Web and ranks its organic search results.

- Examine the infrastructure that powers Google and how its scale and complexity offer key competitive advantages.

Before diving into how the firm makes money, let’s first understand how Google’s core service, search, works.

Perform a search (or query) on Google or another search engine, and the results you’ll see are referred to by industry professionals as organic or natural search. Search engines use different algorithms for determining the order of organic search results, but at Google the method is called PageRank (a bit of a play on words: it ranks Web pages, and it was initially developed by Google cofounder Larry Page). Google does not accept money for placement of links in organic search results. Instead, PageRank results are a kind of popularity contest: all else being equal, Web pages that have more pages linking to them are ranked higher.

The process of improving a page’s organic search results is often referred to as search engine optimization (SEO). SEO has become a critical function for many marketing organizations since if a firm’s pages aren’t near the top of search results, customers may never discover its site.

Google is a bit vague about the specifics of precisely how PageRank has been refined, in part because many have tried to game the system. In addition to in-bound links, Google’s organic search results also consider some two hundred other signals, and the firm’s search quality team is relentlessly analyzing user behavior for clues on how to tweak the system to improve accuracy (Levy, 2010). The less scrupulous have tried creating a series of bogus Web sites, all linking back to the pages they’re trying to promote (this is called link fraud, and Google actively works to uncover and shut down such efforts). We do know that links from some Web sites carry more weight than others. For example, links from Web sites that Google deems “influential,” and links from most “.edu” Web sites, have greater weight in PageRank calculations than links from run-of-the-mill “.com” sites.
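To make the “popularity contest” idea concrete, here is a minimal sketch of the simplified, publicly described form of PageRank. It is not Google’s production ranking, which blends in many other signals. The four-page `web` graph, the function name, and the iteration count are illustrative assumptions; the damping value of 0.85 is the one commonly quoted for the published algorithm.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate a rank for every page in a small link graph.

    links maps each page to the list of pages it links to.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start by giving every page an equal share of the total rank.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page keeps a small baseline, independent of its links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            # A page with no outgoing links spreads its rank over all pages.
            recipients = targets if targets else pages
            share = damping * rank[page] / len(recipients)
            for target in recipients:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page Web: nothing links to "orphan".
web = {
    "home": ["news", "blog"],
    "news": ["home"],
    "blog": ["home", "news"],
    "orphan": ["home"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8s} {score:.3f}")
```

In this toy graph, "home" comes out on top because three pages link to it, while "orphan" sits at the bottom because no page links to it. A link from a highly ranked page also passes along more rank than a link from a low-ranked one, which is one way to picture why some links “carry more weight than others.”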

Spiders and Bots and Crawlers—Oh My!

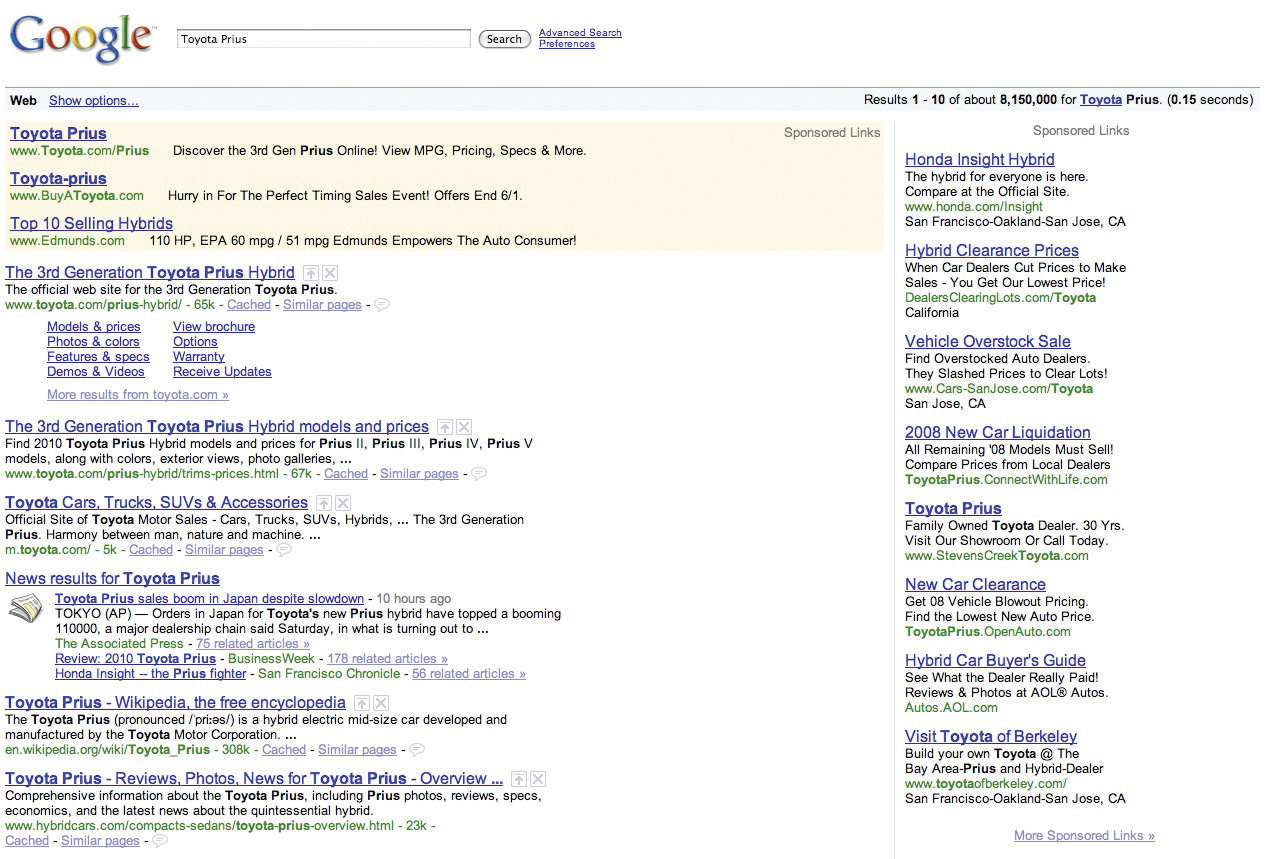

When performing a search via Google or another search engine, you’re not actually searching the Web. What really happens is that the major search engines make what amounts to a copy of the Web, storing and indexing the text of online documents on their own computers. Google’s index considers over one trillion URLs (Wright, 2009). The upper right-hand corner of a Google query shows you just how fast a search can take place (in the example above, rankings from over eight million results containing the term “Toyota Prius” were delivered in less than two tenths of a second).
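One common way to picture “storing and indexing the text of online documents” is an inverted index, which maps each word to the pages that contain it. The sketch below is only an illustration under that assumption; the URLs, page text, and function names are made up, and a real search index is far more elaborate.

```python
from collections import defaultdict


def build_index(documents):
    """Map every word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in documents.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query."""
    words = query.lower().split()
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical crawled pages (URL -> extracted text).
documents = {
    "example.com/prius": "Toyota Prius hybrid review",
    "example.com/camry": "Toyota Camry sedan review",
    "example.com/bikes": "city bikes review",
}
index = build_index(documents)
hits = search(index, "Toyota Prius")
print(hits)
```

Answering the query only requires looking up two words in the index and intersecting the results. No page is fetched from the live Web at search time, which is part of why results can come back in a fraction of a second.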

To create these massive indexes, search firms use software to crawl the Web and uncover as much information as they can find. This software goes by several different names (software robots, spiders, Web crawlers), but all of these programs work in pretty much the same way. In order to make its Web sites visible, every online firm provides a list of all of the public, named servers on its network, known as domain name system (DNS) listings. For example, Yahoo! has different servers that can be found at http://www.yahoo.com, sports.yahoo.com, weather.yahoo.com, finance.yahoo.com, and so on. Spiders start at the first page on every public server and follow every available link, traversing a Web site until all pages are uncovered.

Google will crawl frequently updated sites, like those run by news organizations, as often as several times an hour. Rarely updated, less popular sites might only be reindexed every few days. The method used to crawl the Web also means that if a Web page isn’t the first page on a public server, or isn’t linked to from another public page, then it’ll never be found.¹ Note that each search engine also offers a page where you can submit your Web site for indexing.
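The crawl described above can be sketched as a breadth-first traversal of links. To keep the example self-contained, the “Web site” here is a hypothetical in-memory dictionary rather than real pages fetched over the network, and the page paths are invented.

```python
from collections import deque


def crawl(site, start):
    """Follow links from the start page and return pages in discovery order.

    site maps each page to the list of pages it links to.
    """
    discovered = []
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        discovered.append(page)
        for link in site.get(page, []):
            if link not in seen:  # never visit the same page twice
                seen.add(link)
                queue.append(link)
    return discovered


# Hypothetical site: no page links to "/secret-sale".
site = {
    "/": ["/products", "/about"],
    "/products": ["/products/widget", "/"],
    "/about": ["/"],
    "/products/widget": [],
    "/secret-sale": ["/"],
}
found = crawl(site, "/")
print(found)
```

The page "/secret-sale" exists on the server, but because the spider only follows links and nothing points to that page, it never shows up in the crawl.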

Search engines show you what they’ve found on their copy of the Web’s contents, but clicking a search result will direct you to the actual Web site, not the copy. Sometimes you’ll click a result only to find that the Web site doesn’t match what the search engine found. This happens if a Web site was updated before your search engine had a chance to reindex the changes. In most cases you can still pull up the search engine’s copy of the page. Just click the “Cached” link below the result (the term cache refers to a temporary storage space used to speed computing tasks).

But what if you want the content on your Web site to remain off limits to search engine indexing and caching? Organizations have created a set of standards to stop the spider crawl, and all commercial search engines have agreed to respect these standards. One way is to put a line of HTML code invisibly embedded in a Web site that tells all software robots to stop indexing a page, stop following links on the page, or stop offering old page archives in a cache. Users don’t see this code, but commercial Web crawlers do. For those familiar with HTML code (the language used to describe a Web site), the command to stop Web crawlers from indexing a page, following links, and listing archives of cached pages looks like this:

<META NAME="ROBOTS" CONTENT="NOINDEX, NOFOLLOW, NOARCHIVE">
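As a rough illustration of how a crawler might read this tag, the sketch below uses Python’s standard `html.parser` module to pull the robots directives out of a page. The class name, function name, and sample page are assumptions made for the example; real crawlers handle many more cases.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives from any <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for item in attributes.get("content", "").split(","):
                if item.strip():
                    self.directives.add(item.strip().lower())


def robots_directives(html):
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page carrying the tag discussed above.
page = (
    '<html><head><META NAME="ROBOTS" '
    'CONTENT="NOINDEX, NOFOLLOW, NOARCHIVE"></head>'
    "<body>Members only</body></html>"
)
directives = robots_directives(page)
print(sorted(directives))
```

A well-behaved crawler that finds "noindex" would leave the page out of its index, "nofollow" would stop it from following the page’s links, and "noarchive" would stop it from offering a cached copy.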

There are other techniques to keep the spiders out, too. Web site administrators can add a special file (called robots.txt) that provides similar instructions on how indexing software should treat the Web site. And a lot of content lies inside the “deep Web,” either behind corporate firewalls or inaccessible to those without a user account. Think of private Facebook updates that no one can see unless they’re your friend: all of that is out of Google’s reach.
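To show what a robots.txt file might look like and how a polite crawler could consult it, the sketch below uses `urllib.robotparser` from Python’s standard library. The rules, the bot name "ExampleBot," and the example.com URLs are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents: every crawler is asked to stay
# out of anything under /private/.
rules = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

allowed_public = parser.can_fetch(
    "ExampleBot", "https://www.example.com/index.html"
)
allowed_private = parser.can_fetch(
    "ExampleBot", "https://www.example.com/private/report.html"
)
print(allowed_public, allowed_private)
```

Note that robots.txt is a request, not a lock. It works because commercial search engines have agreed to honor it, so truly sensitive content still belongs behind a login or firewall.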

What’s It Take to Run This Thing?

Sergey Brin and Larry Page started Google with just four scavenged computers (Liedtke, 2008). But in a decade, the infrastructure used to power the search sovereign has ballooned to the point where it is now the largest of its kind in the world (Carr, 2006). Google doesn’t disclose the number of servers it uses, but by some estimates, it runs over 1.4 million servers in over a dozen so-called server farms worldwide (Katz, 2009). In 2008, the firm spent $2.18 billion on capital expenditures, with data centers, servers, and networking equipment eating up the bulk of this cost.² Building massive server farms to index the ever-growing Web is now the cost of admission for any firm wanting to compete in the search market. This is clearly no longer a game for two graduate students working out of a garage.

The size of this investment not only creates a barrier to entry, it influences industry profitability, with market-leader Google enjoying huge economies of scale. Firms may spend the same amount to build server farms, but if Google has nearly 70 percent of this market (and growing) while Microsoft’s search draws less than one-seventh the traffic, which do you think enjoys the better return on investment?
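A quick back-of-the-envelope calculation shows why share matters so much here. The sketch assumes, purely for illustration, that both firms spend the same $2.18 billion, that the leader holds a 70 percent share, and that the rival draws exactly one-seventh of the leader’s traffic. None of these are reported figures for the rival.

```python
def cost_per_share_point(capital_cost, market_share_percent):
    """Capital spent for each percentage point of search traffic served."""
    return capital_cost / market_share_percent


capital_cost = 2.18e9            # hypothetical: same spend for both firms
leader_share = 70.0              # "nearly 70 percent" of searches
rival_share = leader_share / 7   # one-seventh the traffic, i.e., 10 percent

leader_cost = cost_per_share_point(capital_cost, leader_share)
rival_cost = cost_per_share_point(capital_cost, rival_share)
ratio = rival_cost / leader_cost

print(f"Leader: ${leader_cost / 1e6:,.0f} million per share point")
print(f"Rival:  ${rival_cost / 1e6:,.0f} million per share point")
print(f"The rival pays about {ratio:.0f} times as much per point of traffic")
```

Under these assumptions the rival spends roughly seven times as much for every point of traffic it serves, and therefore for every ad it has the chance to show.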

The hardware components that power Google aren’t particularly special. In most cases the firm uses the kind of Intel or AMD processors, low-end hard drives, and RAM chips that you’d find in a desktop PC. These components are housed in rack-mounted servers about 3.5 inches thick, with each server containing two processors, eight memory slots, and two hard drives.

In some cases, Google mounts racks of these servers inside standard-sized shipping containers, each with as many as 1,160 servers per box (Shankland, 2009). A given data center may have dozens of these server-filled containers all linked together. Redundancy is the name of the game. Google assumes individual components will regularly fail, but no single failure should interrupt the firm’s operations (making the setup what geeks call fault-tolerant). If something breaks, a technician can easily swap it out with a replacement.
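A little arithmetic suggests why redundancy is a sensible response to cheap, failure-prone parts. The failure rate below is a made-up number chosen only for illustration, and the calculation assumes that servers fail independently of one another, which real data centers cannot fully guarantee.

```python
def expected_failures(server_count, failure_probability):
    """Average number of servers expected to fail in one period."""
    return server_count * failure_probability


def probability_all_copies_fail(failure_probability, copies):
    """Chance that every copy of some data fails in the same period,
    assuming the failures are independent."""
    return failure_probability ** copies


servers_per_container = 1160    # figure cited in the text
daily_failure_rate = 0.01       # hypothetical: 1 percent per server per day

failures = expected_failures(servers_per_container, daily_failure_rate)
loss_risk = probability_all_copies_fail(daily_failure_rate, copies=3)

print(f"Expected failures per container per day: {failures:.1f}")
print(f"Chance all three copies fail together: {loss_risk:.6f}")
```

With these assumed numbers, something in every container breaks nearly every day, yet the odds of losing all three copies of the same data at once are about one in a million. That is the logic of a fault-tolerant design: expect failures, but make sure no single one matters.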

Each server farm layout has also been carefully designed with an emphasis on lowering power consumption and cooling requirements. And the firm’s custom software (much of it built upon open source products) allows all this equipment to operate as the world’s largest grid computer.

Web search is a task particularly well suited for the massively parallel architecture used by Google and its rivals. For an analogy of how this works, imagine that working alone, you need to find a particular phrase in a hundred-page document (that’s a one-server effort). Next, imagine that you can distribute the task across five thousand people, giving each of them a separate sentence to scan (that’s the multi-server grid). This difference gives you a sense of how search firms use massive numbers of servers and the divide-and-conquer approach of grid computing to quickly find the needles you’re searching for within the Web’s haystack. (For more on grid computing, see Chapter 5 “Moore’s Law: Fast, Cheap Computing and What It Means for the Manager”, and for more on server farms, see Chapter 10 “Software in Flux: Partly Cloudy and Sometimes Free”.)
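The divide-and-conquer analogy can be sketched in a few lines. Here a handful of worker threads on one machine stand in for the thousands of servers in a grid, so the example shows how the work is split and recombined rather than any real speedup. The sentences and function names are invented for the illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def scan_chunk(chunk, phrase):
    """One worker's job: report which of its sentences contain the phrase."""
    return [position for position, sentence in chunk if phrase in sentence]


def parallel_find(sentences, phrase, workers=4):
    """Split the sentences among workers, scan in parallel, merge results."""
    numbered = list(enumerate(sentences))
    # Deal the sentences out like cards, one pile per worker.
    chunks = [numbered[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_results = list(
            pool.map(scan_chunk, chunks, [phrase] * workers)
        )
    # Combine every worker's findings into one ordered answer.
    return sorted(pos for part in partial_results for pos in part)


# Hypothetical "document" of five sentences.
sentences = [
    "The crawler visits every page.",
    "A needle hides in this sentence.",
    "Servers share the work.",
    "Another needle appears here.",
    "Nothing to see.",
]
matches = parallel_find(sentences, "needle", workers=3)
print(matches)
```

Each worker only looks at its own small pile, and a final step merges the partial answers. Adding more workers shrinks each pile, which is the basic reason that throwing more servers at a search can shorten the wait.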

Google will even sell you a bit of its technology so that you can run your own little Google in-house without sharing documents with the rest of the world. Google’s line of search appliances are rack-mounted servers that can index documents within a corporation’s Web site, even specifying password and security access on a per-document basis. Selling hardware isn’t a large business for Google, and other vendors offer similar solutions, but search appliances can be vital tools for law firms, investment banks, and other document-rich organizations.

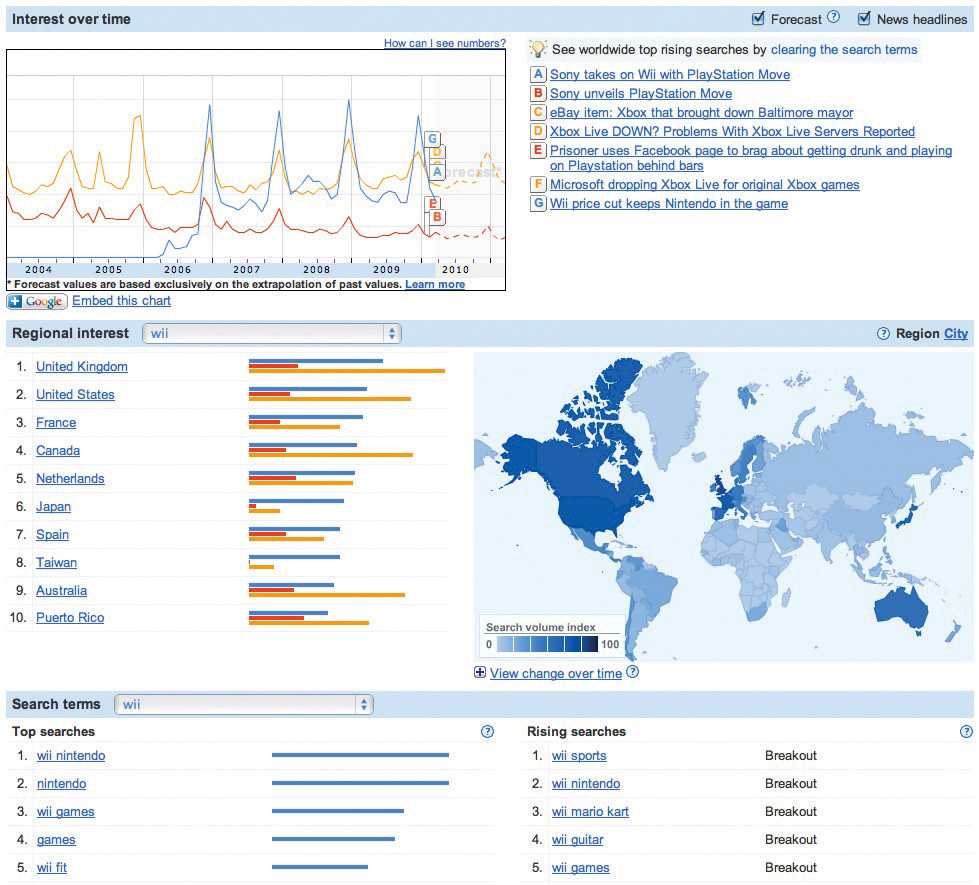

Trendspotting with Google

Google not only gives you search results, it lets you see aggregate trends in what its users are searching for, and this can yield powerful insights. For example, by tracking search trends for flu symptoms, Google’s Flu Trends Web site can pinpoint outbreaks one to two weeks faster than the Centers for Disease Control and Prevention (Bruce, 2009). Want to go beyond the flu? Google’s Trends and Insights for Search services allow anyone to explore search trends, breaking out the analysis by region, category (image, news, product), date, and other criteria. Savvy managers can leverage these and similar tools for competitive analysis, comparing a firm, its brands, and its rivals.

Key Takeaways

- Ranked search results are often referred to as organic or natural search. PageRank is Google’s algorithm for ranking search results. PageRank orders organic search results based largely on the number of Web sites linking to them, and the “weight” of each page as measured by its “influence.”

- Search engine optimization (SEO) is the process of using natural or organic search to increase a Web site’s traffic volume and visitor quality. The scope and influence of search has made SEO an increasingly vital marketing function.

- Users don’t really search the Web; they search an archived copy built by crawling and indexing discoverable documents.

- Google operates from a massive network of server farms containing hundreds of thousands of servers built from standard, off-the-shelf items. The cost of the operation is a significant barrier to entry for competitors. Google’s share of search suggests the firm can realize economies of scale over rivals required to make similar investments while delivering fewer results (and hence ads).

- Web site owners can hide pages from popular search engine Web crawlers using a number of methods, including HTML tags, a robots.txt file, or ensuring that pages aren’t linked to from other pages and haven’t been submitted to search engines for indexing.

Questions and Exercises

- How do search engines discover pages on the Internet? What kind of capital commitment is necessary to go about doing this? How does this impact competitive dynamics in the industry?

- How does Google rank search results? Investigate and list some methods that an organization might use to improve its rank in Google’s organic search results. Are there techniques Google might not approve of? What risk does a firm run if Google or another search firm determines that it has used unscrupulous SEO techniques to try to unfairly influence ranking algorithms?

- Sometimes Web sites returned by major search engines don’t contain the words or phrases that initially brought you to the site. Why might this happen?

- What’s a cache? What other products or services have a cache?

- What can be done if you want the content on your Web site to remain off limits to search engine indexing and caching?

- What is a “search appliance”? Why might an organization choose such a product?

- Become a better searcher: Look at the advanced options for your favorite search engine. Are there options you hadn’t used previously? Be prepared to share what you learn during class discussion.

- Visit Google Trends and Google Insights for Search. Explore the tools as if you were comparing a firm with its competitors. What sorts of useful insights can you uncover? How might businesses use these tools?

1. Most search engines do have a link where you can submit a Web site for indexing, and doing so can help promote the discovery of your content.

2. Google, “Google Announces Fourth Quarter and Fiscal Year 2008 Results,” press release, January 22, 2009.

References

Bruce, S., “Google Says User Data Aids Flu Detection,” eHealthInsider, May 25, 2009.

Carr, D. F., “How Google Works,” Baseline, July 6, 2006.

Katz, R., “Tech Titans Building Boom,” IEEE Spectrum 46, no. 2 (February 1, 2009).

Levy, S., “Inside the Box,” Wired, March 2010.

Liedtke, M., “Google Reigns as World’s Most Powerful 10-Year-Old,” Associated Press, September 5, 2008.

Shankland, S., “Google Unlocks Once-Secret Server,” CNET, April 1, 2009.

Wright, A., “Exploring a ‘Deep Web’ That Google Can’t Grasp,” New York Times, February 23, 2009.